Real-time voice intelligence for every application

Remarkably human-like personalities powered by our empathic voice-to-voice model.

Kora

Stella

Dacher

Whimsy

Aura

Ito

Expressivity

Femininity

Speed

Pitch

Accent

Extroversion

Raspiness

Formality

Rhythm

Enunciation

Hume LLM

Web Search

Tool Use

External LLM

Custom LLM

TTS Injection

NPC

Assistant

Coach

Agent

Tutor

Clinician

App UI

Twilio

TypeScript

Python

React

API

Flagship Model: EVI 2

Our latest voice-to-voice model converses rapidly and fluently with users, understands users' tone of voice, and generates the right tone of voice. Capable of emulating a wide range of personalities, accents, and speaking styles, it can be tailored to each application and user.

Our model capabilities

Multimodal emotional intelligence

EVI 2 merges language and voice into a single model trained specifically for emotional intelligence, enabling it to emphasize the right words, laugh or sigh at appropriate times, and much more, guided by prompting to suit your use case.

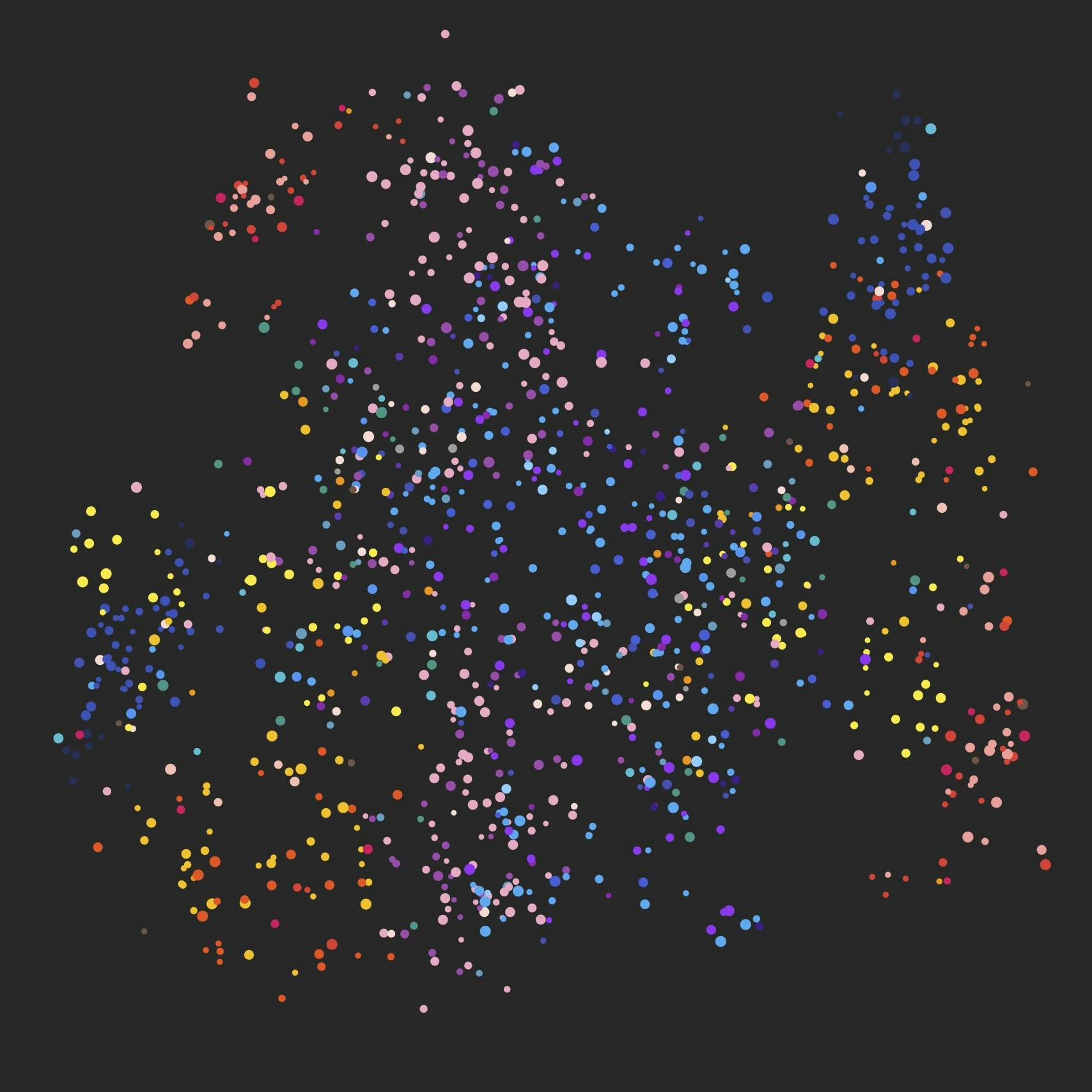

Voice customization without the risks

Create synthetic voices unique to any app or user, without voice cloning. Our novel approach lets you modulate EVI 2’s voice along dimensions like timbre, pitch, nasality, perceived gender, and more, then control its tone and speaking style with your prompt.

Support for any LLM and tool

Let EVI 2 generate all the language, or integrate any external LLM or tool. EVI 2 will seamlessly incorporate any output into the conversation without sacrificing expressiveness, personality, speaking style, or instruction-following capabilities.

Explore our capabilities

Compelling personalities (Aura) with EVI 2

"Hey Aura..."

Empathically expressive speech with EVI 2

"I’m launching something I'm excited about…"

Compelling personalities (Whimsy) with EVI 2

"Hey Whimsy..."

Rapping on command with EVI 2

"Can you freestyle rap about yourself?"

Prompting rate of speech with EVI 2

"Can you speak faster from now on?"

Nonverbal vocalizations with EVI 2

"Could you laugh maniacally for us?"

Inventing new vocal expressions with EVI 2

"Now can you make a sound of joy and enthusiasm?"

Emergent multilingual capabilities with EVI 2

"Can you speak Spanish?"

Compelling personalities (Stella) with EVI 2

"Hey Stella..."

Empathic Voice Interface Pricing

EVI 2 (Beta)

$0.072 / min

Improved transcription, language modeling, and speech generation by a single voice-to-voice model with better empathic responses

Extensive voice customization with 7 base voices, adjustable parameters, and language-based voice prompting

Faster performance with latency of 500-800 ms

Support for English with more languages coming soon

EVI 1 (Legacy)

$0.102 / min

Transcription, language modeling, and text-to-speech coordinated across models to generate empathic responses

Customizable voice options with 3 base voices

Latency range of 900-2000 ms

English language support only
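
To compare the two tiers for a given usage volume, the per-minute rates above can be plugged into a short calculation. The following is a minimal sketch, not an official billing formula: it assumes charges scale linearly with minutes, with no rounding rules, minimums, or volume discounts, and `estimate_cost` is a hypothetical helper name introduced here for illustration.

```python
from decimal import Decimal

# Per-minute rates in USD, copied from the pricing listed above.
RATES_PER_MINUTE = {
    "EVI 2": Decimal("0.072"),
    "EVI 1": Decimal("0.102"),
}


def estimate_cost(model: str, minutes: float) -> Decimal:
    """Estimate the charge in USD for a number of conversation minutes.

    Assumption: simple linear per-minute billing. Actual invoices may
    apply rounding or other terms not described on this page.
    """
    # Decimal avoids binary floating-point drift when multiplying currency.
    return RATES_PER_MINUTE[model] * Decimal(str(minutes))


if __name__ == "__main__":
    for model in RATES_PER_MINUTE:
        print(model, estimate_cost(model, 1000))
```

Under these assumptions, 1,000 minutes of conversation comes to $72 on EVI 2 and $102 on EVI 1, a difference of $30.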

Measure emotional expression with unmatched precision

One API, four modalities, hundreds of dimensions of emotional expression.

Voice models

Image & video models

Speech Prosody

Our models are built on 10+ years of research, millions of proprietary data points, and over 40 publications in leading journals.

From cutting-edge research to proven applications

Supported use cases

Health & Wellness

Social Networks

Education & Coaching

AI Research

Creative Tools

Financial Analysis

Empathic AI companions

How Dot uses Hume’s API for emotional intelligence

Media analytics

How Hume's API helps boost audience growth

Interactive EdTech

How Hume's API enables empathic language toys

Preventative healthcare

How Hume's EVI bridges the gap between appointments

Developer Resources

Developer Platform

Create your Hume account, get your API keys, monitor your usage, and explore our products in the interactive platform.

Developer Documentation

Explore our documentation with concise guides, hands-on tutorials, and an in-depth API reference, all crafted to support your integration.

Developer Community

Join our community of developers and researchers working with Hume APIs: your go-to hub for collaboration, support, and knowledge sharing.
